Pattern · Telecom · April 2026

Agentic AI on a thirty-year-old OSS, without ripping it out

How we wired an agent runtime into a telecom operator's legacy customer system using a custom protocol connector and reusable skill files — turning a read-only audit nightmare into a queryable, governable AI workspace for operations teams.

The situation

A North American telecom operator had been running a single customer system for over thirty years. The system held the operator’s billing relationships, account history, service records, and the bespoke business logic that decades of operations engineers had encoded into stored procedures, triggers, and a relational schema that nobody could fully diagram. Replacing the system was a credible multi-year, multi-million-dollar program. Several previous attempts had failed.

Operations teams — billing, support, dispute resolution — increasingly needed AI-assisted access to the system. Generic chat tools could not reach internal data; copying the data to a vendor cloud failed the operator’s residency requirements; opening the system to an inbound API was rejected by security. The operator needed agentic AI on the legacy system, on the legacy system’s terms.

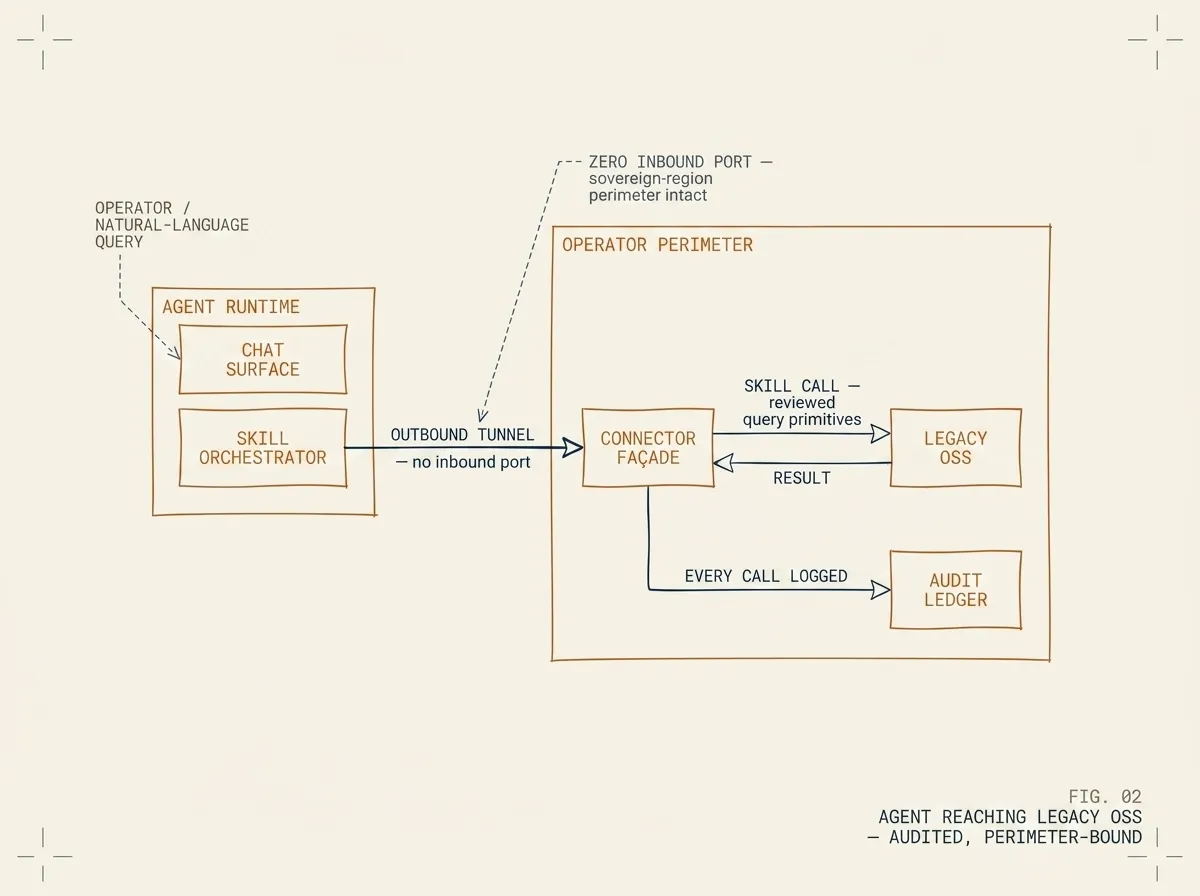

FIG. 02 / AGENT REACHING LEGACY OSS — the agent runtime is outside the perimeter; the OSS, the connector façade, and the audit ledger live inside it. A single outbound-only tunnel is the only crossing. Data does not leave the perimeter.

What we built

We wrapped the OSS in a thin façade: a small service, written in a typed language, that runs inside the operator’s perimeter and exposes the relevant tables and stored procedures as agent-callable tools. An analyst on the support team chats with the agent runtime, which calls those tools to query the OSS on the analyst’s behalf. The OSS itself was not modified: no schema changes, no new triggers, no new code in the legacy stack.
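To make the façade idea concrete, here is a minimal sketch in Python with type hints. It is an illustration under stated assumptions, not the connector we shipped: an in-memory SQLite database stands in for the OSS, the `disputes` table and the `recent_disputes` tool are hypothetical, and a fixed parameterised query stands in for a stored-procedure call, since SQLite has no stored procedures.

```python
from __future__ import annotations

import sqlite3
from dataclasses import dataclass
from typing import Any, Callable

Row = dict[str, Any]


@dataclass(frozen=True)
class Tool:
    """One agent-callable tool: a name, a description, and a handler."""
    name: str
    description: str
    handler: Callable[..., list[Row]]


class Facade:
    """Exposes fixed, parameterised reads over the legacy database as tools."""

    def __init__(self, conn: sqlite3.Connection) -> None:
        conn.row_factory = sqlite3.Row
        # Assumption: the facade only ever reads. In SQLite this pragma makes
        # the connection reject INSERT, UPDATE, DELETE and schema changes.
        conn.execute("PRAGMA query_only = ON")
        self._conn = conn
        self._tools: dict[str, Tool] = {}

    def register(self, name: str, description: str, sql: str) -> None:
        def handler(**params: Any) -> list[Row]:
            # Named placeholders (:account_id) keep values out of the SQL text.
            return [dict(r) for r in self._conn.execute(sql, params)]

        self._tools[name] = Tool(name, description, handler)

    def list_tools(self) -> list[str]:
        return sorted(self._tools)

    def call(self, name: str, **params: Any) -> list[Row]:
        if name not in self._tools:
            raise KeyError(f"unknown tool: {name}")
        return self._tools[name].handler(**params)


def build_demo_oss() -> sqlite3.Connection:
    """Hypothetical stand-in for the legacy system, with a single table."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE disputes (account_id TEXT, opened_on TEXT, status TEXT)"
    )
    conn.executemany(
        "INSERT INTO disputes VALUES (?, ?, ?)",
        [
            ("A-100", "2026-01-05", "OPEN"),
            ("A-100", "2026-02-11", "CLOSED"),
            ("B-200", "2026-02-20", "OPEN"),
        ],
    )
    conn.commit()
    return conn


facade = Facade(build_demo_oss())
facade.register(
    "recent_disputes",
    "Disputes for one account, newest first.",
    "SELECT opened_on, status FROM disputes "
    "WHERE account_id = :account_id ORDER BY opened_on DESC",
)
rows = facade.call("recent_disputes", account_id="A-100")
```

The design choice worth noticing is that the agent never sees SQL. It sees tool names and parameters, and the façade owns the query text.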

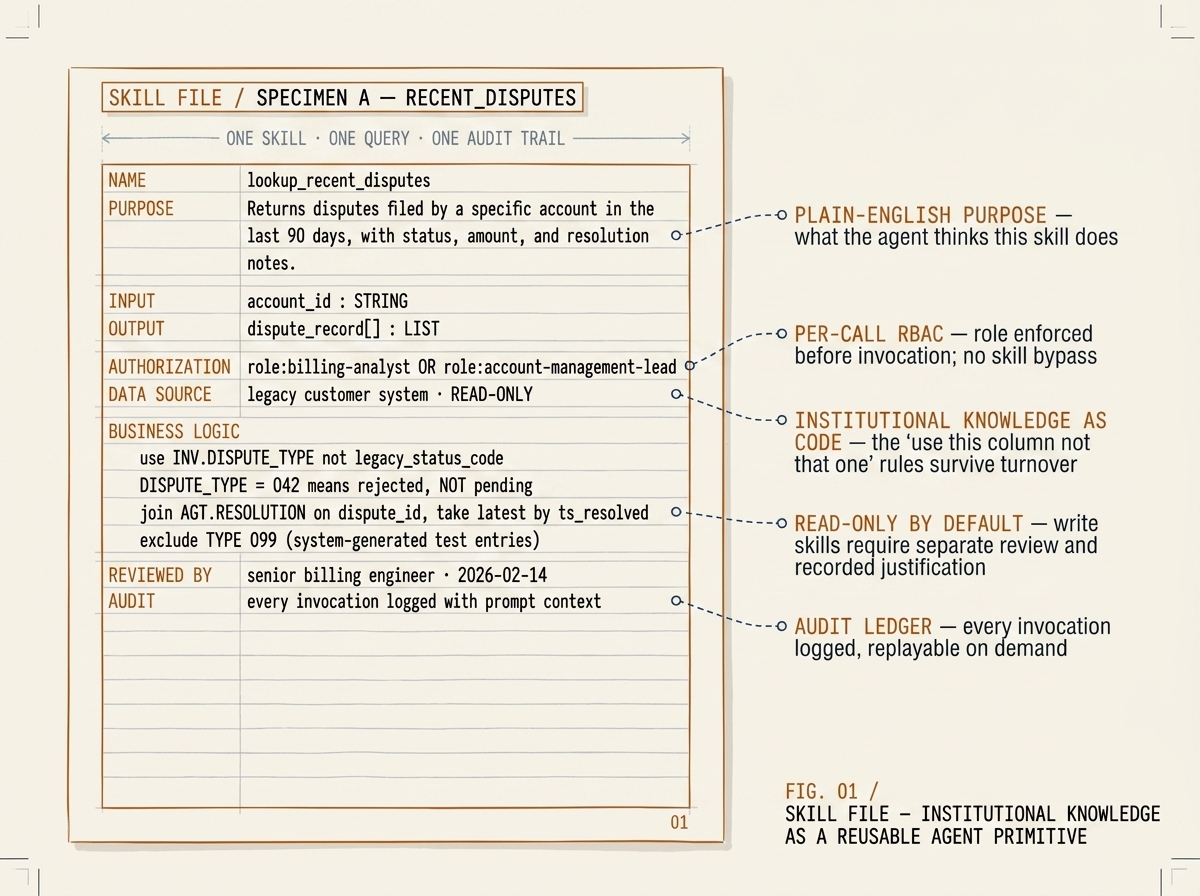

The hard work was on two layers above the connector. First, the skill files: each common operations workflow — “look up an account’s recent disputes,” “summarise a customer’s last six interactions,” “find tickets opened in the last 30 days that referenced this part number” — was encoded as a reusable skill file. The skills capture what experienced operations engineers know about the system: which join is the right one, which status code means “actually billed” versus “ready to bill,” which date column you trust. New team members got the institutional knowledge as code; the agent got reliable composition primitives.
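As an illustration of what a skill file can carry, the sketch below loads one from JSON and checks that the query's placeholders match the declared parameters. The JSON layout, the field names, the `charges` table, and the status codes `B` and `R` are all hypothetical; the real skill format is not shown in this write-up.

```python
from __future__ import annotations

import json
import re
from dataclasses import dataclass

# Hypothetical skill file: every name and code below is invented.
SKILL_FILE = """
{
  "name": "billed_charges_for_account",
  "description": "Charges that were actually billed to an account.",
  "parameters": {"account_id": "string", "since": "date"},
  "query": "SELECT charge_id, amount FROM charges WHERE account_id = :account_id AND status = 'B' AND posted_on >= :since",
  "notes": [
    "Status 'B' means actually billed; 'R' means only ready to bill.",
    "Trust posted_on, not created_on, for billing dates."
  ]
}
"""

# Matches named placeholders such as :account_id in the query text.
PLACEHOLDER = re.compile(r"(?<![:\w]):([A-Za-z_]\w*)")


@dataclass(frozen=True)
class Skill:
    name: str
    description: str
    parameters: dict[str, str]
    query: str
    notes: tuple[str, ...]


def load_skill(text: str) -> Skill:
    """Parse a skill file and refuse it if parameters and query disagree."""
    raw = json.loads(text)
    missing = {"name", "description", "parameters", "query"} - set(raw)
    if missing:
        raise ValueError(f"skill file missing fields: {sorted(missing)}")
    used = set(PLACEHOLDER.findall(raw["query"]))
    declared = set(raw["parameters"])
    if used != declared:
        raise ValueError(
            f"placeholders {sorted(used)} do not match parameters {sorted(declared)}"
        )
    return Skill(
        raw["name"],
        raw["description"],
        dict(raw["parameters"]),
        raw["query"],
        tuple(raw.get("notes", [])),
    )


skill = load_skill(SKILL_FILE)
```

The `notes` field is where the tribal knowledge lands: the reviewer who knows that one status code means "actually billed" writes it down once, next to the query that depends on it.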

Second, governance. Every tool call was logged with the prompt context, the parameters, and the result. The log was append-only and exportable to the operator’s existing security-information system. Read access was granted by default; write access was added later, per skill, after a review process. The agent could not invent a new query — it could only call skills the team had reviewed.
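The governance rule can be sketched in a few lines: dispatch only to reviewed skills, and record every attempt. This is an illustrative sketch, not the production ledger. The class names, the entry fields, and the JSON Lines export format are assumptions, and a real ledger would enforce append-only in storage rather than only in the class interface.

```python
from __future__ import annotations

import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Any, Callable


@dataclass(frozen=True)
class AuditEntry:
    at: str
    user: str
    skill: str
    prompt_context: str
    params: dict[str, Any]
    outcome: str  # "ok" or "rejected"
    result: Any


class AuditLedger:
    """Append-only by interface: entries can be added and exported, not edited."""

    def __init__(self) -> None:
        self._entries: list[AuditEntry] = []

    def append(self, entry: AuditEntry) -> None:
        self._entries.append(entry)

    def export_jsonl(self) -> str:
        # One JSON object per line (assumed export shape for a log collector).
        return "\n".join(
            json.dumps(asdict(e), sort_keys=True) for e in self._entries
        )


class GovernedRuntime:
    """Calls reviewed skills only, and logs accepted and rejected calls alike."""

    def __init__(
        self, reviewed: dict[str, Callable[..., Any]], ledger: AuditLedger
    ) -> None:
        self._reviewed = reviewed
        self._ledger = ledger

    def invoke(self, user: str, skill: str, prompt_context: str, **params: Any) -> Any:
        handler = self._reviewed.get(skill)
        result = handler(**params) if handler is not None else None
        outcome = "ok" if handler is not None else "rejected"
        self._ledger.append(
            AuditEntry(
                datetime.now(timezone.utc).isoformat(),
                user, skill, prompt_context, params, outcome, result,
            )
        )
        if handler is None:
            raise PermissionError(f"skill has not been reviewed: {skill}")
        return result


ledger = AuditLedger()
runtime = GovernedRuntime(
    {"recent_disputes": lambda account_id: [f"dispute for {account_id}"]},
    ledger,
)
result = runtime.invoke(
    "ana", "recent_disputes", "Show disputes for A-100", account_id="A-100"
)
```

A request for a skill that is not in the reviewed set raises `PermissionError`, and the rejected attempt still appears in the export.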

How we kept it safe

Three layers kept it safe. First, the network: the connector ran inside the operator’s perimeter and dialled out to the agent runtime through an outbound-only tunnel, so no inbound port was opened. Second, the tool surface: each skill exposed exactly the parameters the operator wanted exposed and nothing else. Third, authorization: access was role-based via the operator’s existing single-sign-on, so an agent invoked by a billing analyst could not call skills reserved for an account-management lead.
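The tool-surface and authorization checks lend themselves to a short sketch. The outbound-only tunnel is a network concern and is not modelled here. The skill names, role names, and the `authorize` helper are hypothetical, and the caller's roles are assumed to arrive as claims from the single-sign-on provider.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class SkillPolicy:
    allowed_params: frozenset[str]  # the only parameters the skill accepts
    allowed_roles: frozenset[str]   # roles permitted to invoke the skill


# Hypothetical policy table; in practice this would live beside the skill files.
POLICIES: dict[str, SkillPolicy] = {
    "recent_disputes": SkillPolicy(
        frozenset({"account_id"}),
        frozenset({"billing_analyst", "account_lead"}),
    ),
    "reassign_account": SkillPolicy(
        frozenset({"account_id", "owner"}),
        frozenset({"account_lead"}),
    ),
}


def authorize(skill: str, caller_roles: set[str], params: dict[str, Any]) -> None:
    """Raise unless the caller may run this skill with exactly these parameters."""
    policy = POLICIES.get(skill)
    if policy is None:
        raise PermissionError(f"unknown skill: {skill}")
    if not (caller_roles & policy.allowed_roles):
        raise PermissionError(f"caller roles may not invoke {skill}")
    extra = set(params) - policy.allowed_params
    if extra:
        raise ValueError(f"parameters not exposed by {skill}: {sorted(extra)}")


# A billing analyst may look up disputes with the one exposed parameter.
authorize("recent_disputes", {"billing_analyst"}, {"account_id": "A-100"})
```

Calling `authorize("reassign_account", {"billing_analyst"}, ...)` raises `PermissionError`, and passing a parameter outside `allowed_params` raises `ValueError` before any query is built.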

Read-only was the default for the first six months of production use. Write access was added per skill — first for low-risk operations like attaching notes to a ticket, then for higher-risk ones like updating a service status. Each write skill required a recorded justification on invocation; the audit log made the justification queryable.
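One way to picture the justification rule: a write skill refuses to run without a non-empty justification, and the justification is stored where it can be queried later. The sketch below is an assumption-laden illustration. The `audit` table, the `attach_ticket_note` skill name, and the in-memory SQLite store are all hypothetical stand-ins, and the write to the legacy system itself is left as a comment.

```python
from __future__ import annotations

import sqlite3

# Hypothetical audit store; an in-memory database keeps the sketch self-contained.
audit = sqlite3.connect(":memory:")
audit.execute(
    "CREATE TABLE audit ("
    "id INTEGER PRIMARY KEY, actor TEXT, skill TEXT, justification TEXT)"
)

# Write access is switched on per skill, after review.
WRITE_ENABLED = {"attach_ticket_note"}


def invoke_write(actor: str, skill: str, justification: str) -> int:
    """Record the justification, then (in a real system) perform the write."""
    if skill not in WRITE_ENABLED:
        raise PermissionError(f"write access not enabled for {skill}")
    reason = justification.strip()
    if not reason:
        raise ValueError("a justification is required for write skills")
    cur = audit.execute(
        "INSERT INTO audit (actor, skill, justification) VALUES (?, ?, ?)",
        (actor, skill, reason),
    )
    audit.commit()
    # The call into the legacy system would happen here.
    return cur.lastrowid


def justifications_for(skill: str) -> list[tuple[str, str]]:
    """Everyone who used a write skill, and the reason each gave, oldest first."""
    return audit.execute(
        "SELECT actor, justification FROM audit WHERE skill = ? ORDER BY id",
        (skill,),
    ).fetchall()


entry_id = invoke_write(
    "ana", "attach_ticket_note", "Customer asked for a callback note"
)
```

Recording the justification before the write means a failed write still leaves a trace of who attempted it and why.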

What it produced

Operations engineers stopped writing queries for routine work. A lookup that used to take fifteen minutes of formulating a join, running it, and reformatting the result became a sentence in a chat. Onboarding time for new analysts dropped because the institutional knowledge was no longer tribal; it lived in the skill library. The audit trail satisfied the operator’s privacy and security teams: every query had a who, a what, and a result.

The OSS modernization program did not stop. But it stopped being the blocker on the AI use case.

What this pattern is good for

This pattern works when three things are true: the legacy system is too valuable or too expensive to rewrite in the near term, internal teams are ready to take on AI-assisted operations (not just AI-assisted demos), and the operator can invest in the skill-file authoring discipline that makes the agent reliable rather than impressive.

If the system is small enough to rewrite in a year, rewrite it instead. If the team is not ready to author and review skill files, the agent will produce convincing wrong answers. If the deployment cannot be sovereign, meaning the data has to leave the perimeter for the agent to function, this is not the right pattern. Otherwise, expect eight to twelve weeks to a read-access pilot and six to nine months to write access for the skills that warrant it.
