Product · Orchestration & Runtime · Beta

IROS

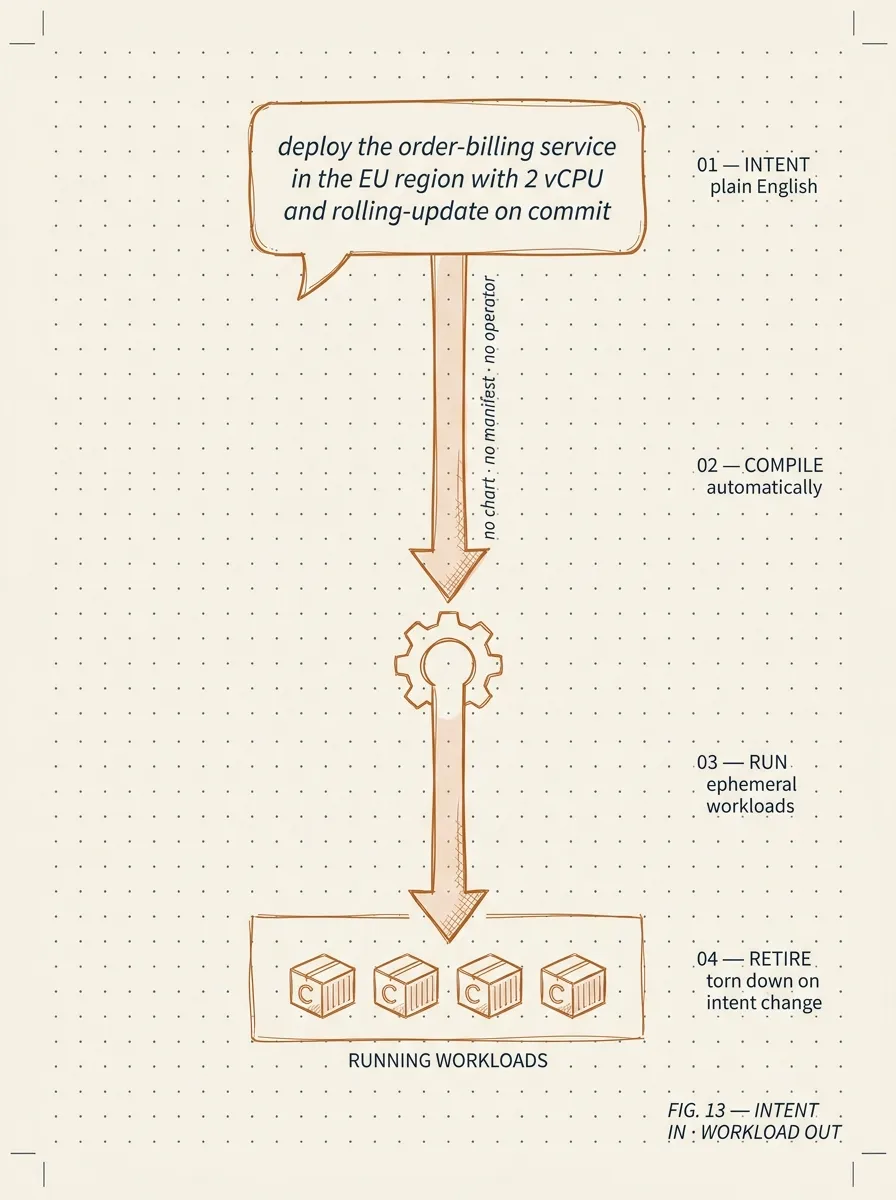

Intent Runtime OS. Compile a natural-language intent into a policy-validated, ephemeral container workload. The intent is the deployment unit — there is no chart, no manifest between you and the running workload.

The problem

Most container workloads only run for an afternoon. They don't deserve a manifest.

A modern operations team produces dozens of one-shot workloads a week — a data extract, a one-off migration, a scheduled enrichment, an ad-hoc enforcement check. Every one needs a base image, a command line, an environment, and a permissions decision. In a typical orchestrator that is a job manifest, a permissions binding, and someone's afternoon.

IROS replaces that ceremony with an intent. Type the intent in English; the runtime resolves it against a policy graph (which images are allowed, which secrets are reachable, which networks are in scope), schedules the workload on Odysseus, and tears it down when it's done. The audit log captures the intent, the policy decision, and the workload result.

How it works

Intent → policy → ephemeral container.


01 · Intent

"Run the nightly meter reconciliation against last week's data and write the report to the audit bucket."

Natural language, plus optional parameters. Submitted via CLI, API, or chat.


02 · Resolution

IROS resolves the intent against the policy graph.

Which image satisfies "meter reconciliation"? Which secret grants access to the "audit bucket"? Which network is in scope? The policy graph is yours to author; the resolution is deterministic and auditable.


03 · Schedule

The resolved workload is submitted to Odysseus.

Container starts, runs, exits. Logs and exit code captured. No long-lived deployment object.


04 · Audit

Intent, policy decision, and result are recorded as one row.

A reviewer can answer "what ran, why was it allowed, and what did it produce" without stitching together kubectl logs.
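
The four steps above can be sketched in a few lines of Python. This is an illustration only, not the IROS API: the `POLICY_GRAPH` layout, the phrase-matching rule, the `resolve`, `run_intent`, and `fake_scheduler` names, and every image, secret, and network name are assumptions made for the example. The scheduling step is a stub and does not call Odysseus.

```python
import json
from dataclasses import asdict, dataclass
from typing import Callable, Optional

# Hypothetical policy graph. Every name here is invented for illustration.
POLICY_GRAPH = {
    "images": {"meter reconciliation": "registry.internal/meter-recon:1.4"},
    "secrets": {"audit bucket": "secret/audit-bucket-writer"},
    "networks": {"audit bucket": "net-audit"},
}


@dataclass(frozen=True)
class Decision:
    allowed: bool
    image: Optional[str]
    secret: Optional[str]
    network: Optional[str]
    reason: str


def _match(table: dict, intent: str) -> Optional[str]:
    """Return the value of the first phrase (in sorted order) found in the intent."""
    text = intent.lower()
    for phrase in sorted(table):  # sorted, so the same input always resolves the same way
        if phrase in text:
            return table[phrase]
    return None


def resolve(intent: str, graph: dict = POLICY_GRAPH) -> Decision:
    """Step 02: map the intent onto an allowed image, secret, and network."""
    image = _match(graph["images"], intent)
    if image is None:
        return Decision(False, None, None, None, "no allowed image matches the intent")
    secret = _match(graph["secrets"], intent)
    network = _match(graph["networks"], intent)
    return Decision(True, image, secret, network, "matched policy graph")


def fake_scheduler(decision: Decision) -> dict:
    """Step 03 stand-in: pretend the container ran and exited cleanly."""
    return {"exit_code": 0, "logs": f"ran {decision.image}"}


def run_intent(intent: str, scheduler: Callable[[Decision], dict]) -> dict:
    """Steps 01 to 04: resolve, schedule if allowed, and return one audit row."""
    decision = resolve(intent)
    result = scheduler(decision) if decision.allowed else None
    return {"intent": intent, "decision": asdict(decision), "result": result}


if __name__ == "__main__":
    row = run_intent(
        "Run the nightly meter reconciliation against last week's data "
        "and write the report to the audit bucket.",
        fake_scheduler,
    )
    print(json.dumps(row, indent=2))
```

The point of the sketch is the shape of the audit row: intent, decision, and result travel together, so a denied intent still produces a row, just with no result.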

Sovereign by default

IROS runs on Odysseus inside your environment.

The runtime is a small Go control plane. The execution layer is Odysseus — your container host of choice. The policy graph is yours. There is no SaaS, no phone-home, no shared cloud.

For agentic AI use cases IROS pairs with Maria: an agent submits an intent on behalf of a user; IROS resolves it under the user's policy; the workload runs ephemerally and reports back. The agent never touches the cluster directly.
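
One way to picture that delegation, as a hedged sketch: the agent's identity is recorded, but the policy lookup is keyed on the user. `USER_POLICIES`, `Submission`, and `authorize` are hypothetical names chosen for the example and are not part of IROS or Maria.

```python
from dataclasses import dataclass

# Hypothetical per-user policy: the set of images each user may run.
USER_POLICIES = {
    "alice": {"registry.internal/report-gen:2.0"},
    "bob": set(),
}


@dataclass(frozen=True)
class Submission:
    agent_id: str      # who submitted the intent (kept for the audit trail only)
    on_behalf_of: str  # the user whose policy applies
    image: str         # the image the intent resolved to


def authorize(sub: Submission) -> bool:
    """Allow the workload only if the user's own policy permits the image.

    The agent's identity grants nothing by itself, so an agent cannot
    exceed the permissions of the user it is acting for.
    """
    allowed_images = USER_POLICIES.get(sub.on_behalf_of, set())
    return sub.image in allowed_images
```

An unknown user resolves to an empty policy here, so the default is to deny.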

Where it fits

Anywhere a manifest is overkill for the workload's lifetime.

Ephemeral CI/CD jobs

Build steps, integration test runs, deployment tasks: short-lived workloads that ended up in Compose files or shell scripts because authoring an orchestrator manifest per job was disproportionate to the work. IROS turns the same job into an intent: describe it once, run it on demand, no leftover manifest to maintain.

Periodic data pipeline tasks

Hourly extracts, nightly transforms, weekly reconciliation jobs. Each task is a one-paragraph intent against a known data source. Policies bound the resource consumption and the output destination so a misbehaving pipeline cannot starve the rest of the platform.
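
A minimal sketch of what such a bound could look like, assuming a policy that caps CPU and memory and restricts where output may be written. The `Limits` and `Request` types, the `violations` function, and the bucket URIs are hypothetical and only illustrate the idea.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Limits:
    max_cpu_cores: float
    max_memory_mib: int
    allowed_output_prefixes: tuple  # URI prefixes the task may write to


@dataclass(frozen=True)
class Request:
    cpu_cores: float
    memory_mib: int
    output_uri: str


def violations(req: Request, limits: Limits) -> list:
    """Return every way the request exceeds policy; an empty list means it fits."""
    problems = []
    if req.cpu_cores > limits.max_cpu_cores:
        problems.append(f"cpu {req.cpu_cores} exceeds limit {limits.max_cpu_cores}")
    if req.memory_mib > limits.max_memory_mib:
        problems.append(f"memory {req.memory_mib} MiB exceeds limit {limits.max_memory_mib} MiB")
    # str.startswith accepts a tuple and matches if any prefix matches.
    if not req.output_uri.startswith(limits.allowed_output_prefixes):
        problems.append(f"output {req.output_uri} is outside the allowed destinations")
    return problems
```

Returning all violations at once, rather than stopping at the first, gives the audit record a complete reason for a denial.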

AI-orchestrated workloads

Agents that need to spawn computational work on the user's behalf — running an analysis, processing a file, generating a report. The agent submits an intent; IROS validates it against policy; a short-lived container does the work; the result returns to the agent. No standing infrastructure per agent.

Preview and staging environments

Per-branch preview deployments, ephemeral staging environments for review, on-demand sandbox containers for support engineers. Each one compiles from intent rather than from a hand-maintained manifest, which makes the cost of standing one up trivial enough that nobody asks first.

Patterns that use this product

Worked examples from real engagements.

Government · Pattern

How we shipped AI workflow automation inside a Canadian municipality's tenant

Natural-language workflow building (Kacha) deployed inside the customer's tenant — no data leaves the perimeter, no license per workflow, no foreign cloud. From scoping to first workflow in production in under ten weeks.

8–12 weeks from scope to production

Telecom · Pattern

Agentic AI on a thirty-year-old OSS, without ripping it out

How we wired an agent runtime into a telecom operator's legacy customer system using a custom protocol connector and reusable skill files — turning a read-only audit nightmare into a queryable, governable AI workspace for operations teams.

8–12 weeks for a production read-access pilot

Cross-sector · Pattern

Replacing legacy field-service applications without disrupting operations

How we migrate operationally critical field-service applications onto the operator's existing enterprise platform — preserving the workflow, replacing the lock-in. Worked example: a continental field-service replacement that ran every shift through the cutover without missing a dispatch.

10–14 weeks end-to-end

Get started

The intent is the deployment unit.

Tell us what one-shot workloads run in your stack today. We'll set up an IROS pilot and you can stop writing Job manifests.

Request a pilot