Product · AI & Automation · Production

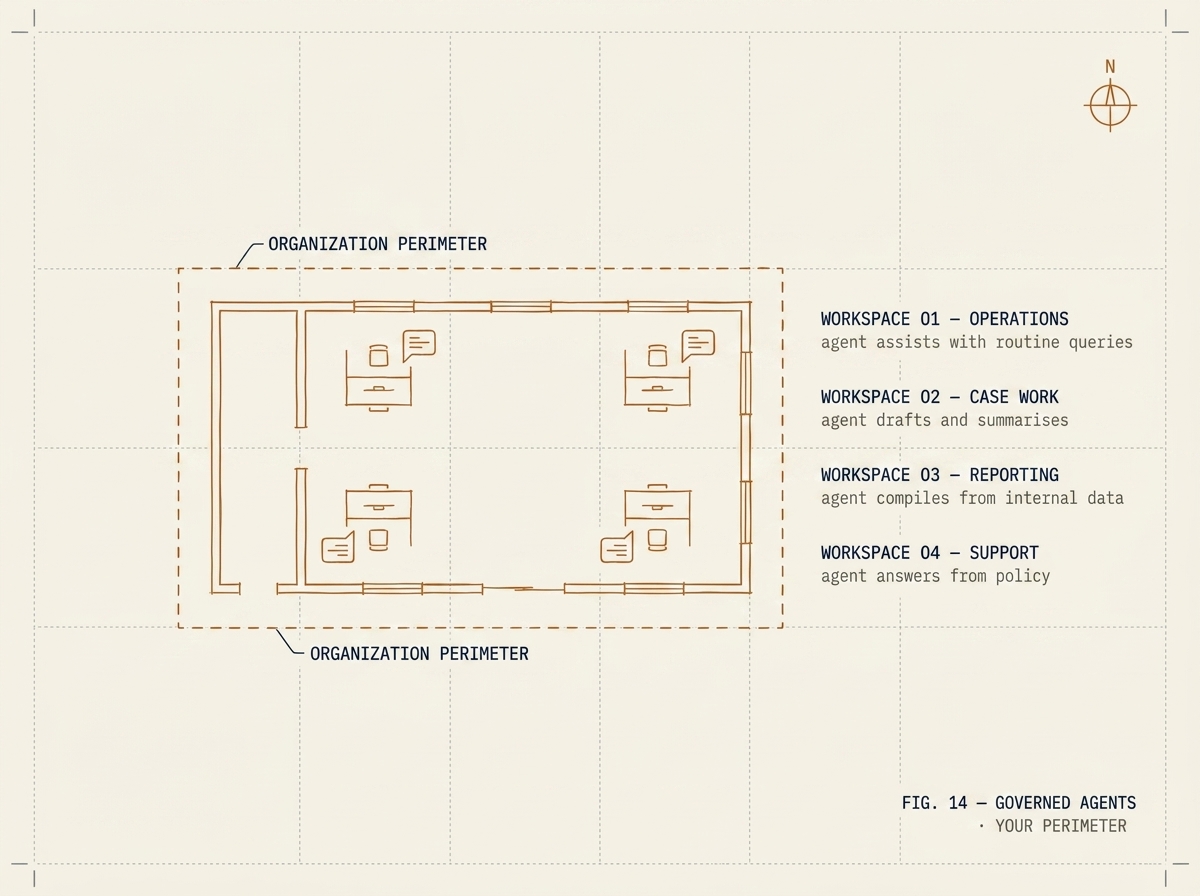

Enterprise AI orchestration. Compose, govern, and observe AI agents across your organization — without sending prompts or responses outside your perimeter.

The problem

The first AI pilot ships fast. It produces a chatbot, a summarizer, and a retrieval demo. Then governance asks: who ran what, against what data, and where did the prompts go? The vendor's audit log does not answer those questions, and the SaaS endpoint is in the wrong country. The pilot stops there.

Maria starts where that pilot ends. It is the orchestration plane that turns ad-hoc AI use into a governed capability — agents are composed once, authorized per role, and observed per tool call. Data residency is a deployment decision, not a vendor's policy.

How it works

01 · Agents

Each agent has an explicit role, a permitted toolset (MCP servers, internal APIs, retrieval indexes), and a model assignment. Composition is YAML; deployment is a CLI command.
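To make the shape of an agent declaration concrete, here is a minimal Python sketch of the three declared properties (role, permitted toolset, model assignment) and the permission check they imply. The class, field names, and example values are hypothetical and are not Maria's actual YAML schema or API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentSpec:
    """Hypothetical agent declaration; field names are illustrative only."""
    name: str
    role: str
    tools: frozenset[str]  # permitted toolset (MCP servers, APIs, indexes)
    model: str             # model assignment

    def may_call(self, tool: str) -> bool:
        # An agent may only call tools listed in its own declaration.
        return tool in self.tools


# Hypothetical example values.
claims_agent = AgentSpec(
    name="claims-triage",
    role="claims-analyst",
    tools=frozenset({"mcp:documents", "api:claims-read"}),
    model="open-model-on-prem",
)

print(claims_agent.may_call("api:claims-read"))   # True
print(claims_agent.may_call("api:claims-write"))  # False
```

Keeping the declaration immutable (`frozen=True`) reflects the idea that an agent's permissions change through redeployment, not at runtime.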

02 · Tools & connectors

Tools are protocol-first. Native connectors cover the enterprise platforms, productivity suites, geospatial systems, and SQL/NoSQL stores most organizations run. A custom connector is a few hundred lines of code, not a few weeks of work.
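As an illustration of what "protocol-first" can mean for a custom connector, the sketch below defines a small interface and a toy implementation. The `Connector` protocol, the `InMemoryTicketConnector` class, and the `invoke` helper are assumptions made for this example; they do not describe Maria's real connector interface.

```python
from typing import Any, Protocol


class Connector(Protocol):
    """Hypothetical connector interface, for illustration only."""
    name: str

    def call(self, operation: str, params: dict[str, Any]) -> dict[str, Any]: ...


class InMemoryTicketConnector:
    """Toy connector backed by a dict, standing in for a real system."""
    name = "tickets"

    def __init__(self, tickets: dict[str, dict[str, Any]]) -> None:
        self._tickets = tickets

    def call(self, operation: str, params: dict[str, Any]) -> dict[str, Any]:
        if operation == "get":
            ticket = self._tickets.get(params["id"])
            if ticket is None:
                return {"ok": False, "error": "not_found"}
            return {"ok": True, "ticket": ticket}
        return {"ok": False, "error": f"unsupported operation: {operation}"}


def invoke(connector: Connector, operation: str, params: dict[str, Any]) -> dict[str, Any]:
    # The caller depends only on the protocol, not on a concrete class.
    return connector.call(operation, params)


connector = InMemoryTicketConnector({"T-1": {"status": "open"}})
result = invoke(connector, "get", {"id": "T-1"})
print(result["ok"])
```

Because the caller is written against the interface, swapping the toy backend for a real system would not change the calling code.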

03 · Governance

Roles are mapped from your OIDC or SAML identity provider. Every tool call is logged with prompt context, parameters, and result. Logs are append-only and exportable for review.
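One common way to make a tool-call log append-only in a verifiable sense is to chain each record to the previous one by hash. The sketch below shows that idea along with a JSON Lines export. Hash chaining, the `AuditLog` class, and the record fields are assumptions for illustration; the text above does not say how Maria implements its logs.

```python
import hashlib
import json
from typing import Any


class AuditLog:
    """Hypothetical append-only log of tool calls, chained by SHA-256."""

    def __init__(self) -> None:
        self._entries: list[dict[str, Any]] = []

    @staticmethod
    def _digest(body: dict[str, Any]) -> str:
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def append(self, agent: str, tool: str, prompt_context: str,
               params: dict[str, Any], result: str) -> dict[str, Any]:
        # Each record stores the hash of the previous one ("" for the first).
        prev_hash = self._entries[-1]["hash"] if self._entries else ""
        record = {
            "agent": agent, "tool": tool, "prompt_context": prompt_context,
            "params": params, "result": result, "prev_hash": prev_hash,
        }
        record["hash"] = self._digest(record)
        self._entries.append(record)
        return record

    def verify(self) -> bool:
        # Recompute every hash; any edited or reordered record breaks the chain.
        prev_hash = ""
        for entry in self._entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(body):
                return False
            prev_hash = entry["hash"]
        return True

    def export_jsonl(self) -> str:
        # One JSON object per line, suitable for external review tooling.
        return "\n".join(json.dumps(e, sort_keys=True) for e in self._entries)


log = AuditLog()
log.append("claims-triage", "api:claims-read", "summarize claim", {"id": "C-1"}, "ok")
log.append("claims-triage", "mcp:documents", "fetch policy", {"doc": "P-9"}, "ok")
print(log.verify())
```

A reviewer who receives the export can rerun the same verification without trusting the system that produced it.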

04 · Observability

Standard metrics, distributed traces, and per-agent quality dashboards. Cost attribution by team, by workflow, by model.
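Cost attribution by team, workflow, or model amounts to grouping per-call cost records along one dimension. A minimal sketch, assuming a hypothetical `CallCost` record with costs held in integer cents (the record shape and sample figures are invented for illustration):

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class CallCost:
    """Hypothetical per-call cost record; costs are in integer cents."""
    team: str
    workflow: str
    model: str
    cost_cents: int


def attribute(calls: list[CallCost], dimension: str) -> dict[str, int]:
    # Sum cost along one dimension: "team", "workflow", or "model".
    totals: dict[str, int] = defaultdict(int)
    for call in calls:
        totals[getattr(call, dimension)] += call.cost_cents
    return dict(totals)


# Invented sample data.
calls = [
    CallCost("claims", "triage", "open-model-on-prem", 2),
    CallCost("claims", "summary", "frontier-ca", 3),
    CallCost("ops", "triage", "frontier-ca", 7),
]

print(attribute(calls, "team"))   # {'claims': 5, 'ops': 7}
print(attribute(calls, "model"))  # {'open-model-on-prem': 2, 'frontier-ca': 10}
```

Integer cents avoid floating-point rounding drift when many small per-call costs are summed.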

Sovereign by default

Maria deploys as a containerized stack inside the customer's sovereign cloud tenant or on-premises. The control plane and the data plane both live in the customer's perimeter. There is no Maria SaaS — there is no shared cloud where customer prompts could end up.

Model inference uses whichever provider fits the use case: a Canadian-region frontier model, or an open model on the customer's own GPU. Maria knows which model is in scope per agent and routes accordingly.
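Per-agent routing can be pictured as a lookup from agent to assigned model, followed by a residency check on the endpoint. The sketch below assumes hypothetical endpoint names, region labels, and an allow-list. None of these identifiers come from Maria itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelEndpoint:
    """Hypothetical model endpoint; region labels are illustrative."""
    name: str
    region: str  # "ca" for a Canadian-region hosted model, "on-prem" for customer GPUs


# Hypothetical configuration.
ENDPOINTS = {
    "frontier-ca": ModelEndpoint("frontier-ca", "ca"),
    "open-model-on-prem": ModelEndpoint("open-model-on-prem", "on-prem"),
}
AGENT_MODELS = {
    "claims-triage": "open-model-on-prem",
    "policy-summarizer": "frontier-ca",
}
ALLOWED_REGIONS = {"ca", "on-prem"}


def route(agent: str) -> ModelEndpoint:
    # Resolve the model assigned to this agent, then enforce residency.
    model = AGENT_MODELS.get(agent)
    if model is None:
        raise LookupError(f"no model assigned to agent {agent!r}")
    endpoint = ENDPOINTS[model]
    if endpoint.region not in ALLOWED_REGIONS:
        raise PermissionError(f"{endpoint.name} is outside the allowed regions")
    return endpoint


print(route("claims-triage").region)      # on-prem
print(route("policy-summarizer").region)  # ca
```

Failing closed on an unknown agent or a disallowed region keeps a misconfiguration from silently sending prompts to the wrong endpoint.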

Patterns that use this product

Government · Pattern

Natural-language workflow building (Kacha) deployed inside the customer's tenant: no data leaves the perimeter, no license per workflow, no foreign cloud. From scoping to first workflow in production in 8–12 weeks.

8–12 weeks from scope to production

Telecom · Pattern

How we wired an agent runtime into a telecom operator's legacy customer system using a custom protocol connector and reusable skill files — turning a read-only audit nightmare into a queryable, governable AI workspace for operations teams.

8–12 weeks for a production read-access pilot

Get started

Tell us what you'd put an agent to work on. We'll come back with a 30-day deployment plan and a governance model your CISO will sign off on.

Talk to an engineer